Scaling personalised support: LLMs and human empowerment

What impact will LLMs and generative AI have on society? Polarised narratives have emerged: alarmism on the one hand, utopian solutionism on the other. The reality, as always, is nuanced. As AI research maintains its breakneck pace, we take the optimistic view that there’s a generational opportunity to enrich people’s lives at mass scale.

We’ve recently shared our investing framework for generative AI applications. Of the 500+ startups we’re tracking, many are using large transformer models to generate or interpret material that touches the most critical areas of users’ lives: their health, their education, and their social interactions.

The problem: a workforce that doesn’t scale

As a society, we need these services to succeed. Even in the affluent West, we grapple with a structural undersupply of key workers: nurses and clinicians; teachers and tutors. The European Commission estimates a shortage of nearly 1 million health workers across the continent. Last year, the UK saw its largest recorded exodus of teachers from the profession, and the situation is similar in the US. Collectively, we are looking at a society where highly personalised support is becoming an increasingly scarce resource, afforded only to the privileged few. Solving this problem is a social imperative – and also a vast commercial opportunity, with new revenue available from individuals, private providers, and the public sector.

The solution: personalised services that do scale

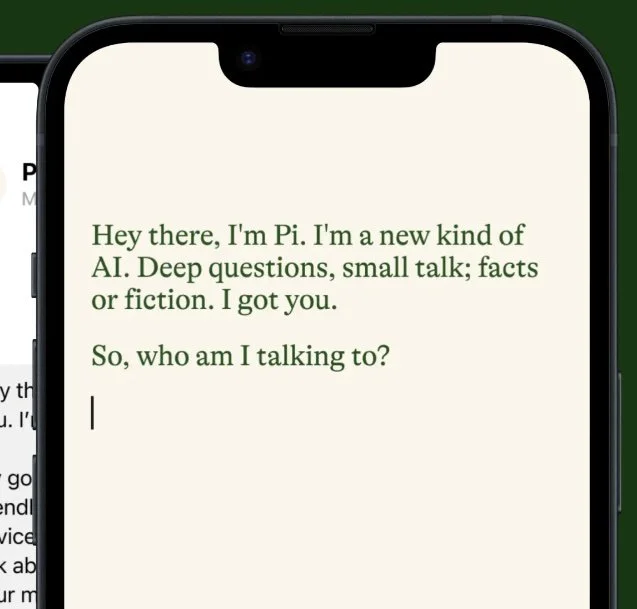

If there can be a silver bullet, it is to offer digital services that are tailored to the needs of the individual, yet delivered in a scalable and low-cost way. We’ve laid out our product thesis for such an application in education – and have since made an investment in a personal tutor, about which you’ll hear more soon. Similar concepts are emerging in other categories. With Inflection, we’ve backed a personal companion that seeks to inspire, inform, and perhaps help to combat the loneliness epidemic. In healthcare, we’re excited about patient-facing diagnostic and therapeutic applications – where LLMs could offer a naturalistic, empathetic interaction without needing the scarce resource of a human practitioner’s time. Indeed, we’ve been backing digital therapeutics since our investment in Clue back in 2015. We’re equally excited by practitioner-facing decision support tools that can automate basic work and free practitioners to focus on the most critical interactions. With regard to the future of work, we’re backing platforms that give the workforce precisely the tools they need to achieve their full potential.

LLMs and generative AI will power all of these applications – but what does it take to deploy them in a useful, responsible, and defensible way?

LLMs alone are not the answer

The limitations of LLMs are well documented. GPT-4 alone cannot carry out patient diagnoses to an acceptable threshold of accuracy. LLMs are not reasoning engines, so they can’t reliably tell your kids whether they solved a math problem correctly. They are stochastic by nature, so their responses to the same prompt may vary. They may hallucinate; they tend to be black boxes; and, if deployed alone, they lack the domain-specific training that is often needed to give fine-grained responses. These limitations clearly rule out deploying an LLM on its own in sensitive, safety-critical settings – clinics, classrooms, courtrooms, and so on.

Regulation is inevitable, and the EU’s AI Act will set global precedents and standards – as the EU has already done with GDPR and many other regulations. And if we let ourselves dream of AGI, the problem of alignment will only become more acute.

An architecture for safe, defensible AI applications

Defensible AI-first companies will need to be much more than interfaces to foundation models. We are now seeing a common architecture in many verticals, where LLMs are prompted and guided by proprietary, domain-specific model(s). The latter makes the sensitive decisions and carries out an assurance / validation role – while the former generates personalised content that is constrained by the bounds of the proprietary model(s).
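To make this division of labour concrete, here is a minimal sketch in Python using a toy tutoring example. Everything in it is an illustrative assumption rather than any company’s actual implementation: `domain_model` stands in for the proprietary model (here it is just arithmetic), `agreeable_llm` is a stub in place of a real foundation model call, and the keyword check in `validate` is a deliberately crude placeholder for a real assurance step.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Verdict:
    """Output of the (hypothetical) proprietary domain model."""
    is_correct: bool


def domain_model(a: int, b: int, student_answer: int) -> Verdict:
    """Stand-in for the proprietary model: it makes the sensitive decision."""
    return Verdict(is_correct=(student_answer == a + b))


def build_prompt(verdict: Verdict) -> str:
    """Pass the decision to the LLM as a constraint, not something to infer."""
    outcome = "correct" if verdict.is_correct else "incorrect"
    return f"The student's answer is {outcome}. Say so in one encouraging sentence."


def validate(draft: str, verdict: Verdict) -> bool:
    """Assurance step: reject drafts that contradict the domain model."""
    text = draft.lower()
    says_incorrect = "incorrect" in text or "not correct" in text
    says_correct = "correct" in text and not says_incorrect
    return says_correct if verdict.is_correct else says_incorrect


def respond(a: int, b: int, student_answer: int, llm: Callable[[str], str]) -> str:
    """Domain model decides, LLM phrases, validator has the final say."""
    verdict = domain_model(a, b, student_answer)
    draft = llm(build_prompt(verdict))
    if validate(draft, verdict):
        return draft
    # The draft is out of bounds: fall back to a deterministic template.
    outcome = "correct" if verdict.is_correct else "incorrect"
    return f"Your answer is {outcome}."


def agreeable_llm(prompt: str) -> str:
    """Stub LLM (assumption) that always praises, whatever the prompt says."""
    return "Nice work, that is correct!"


if __name__ == "__main__":
    print(respond(2, 3, 5, agreeable_llm))  # draft agrees with the verdict
    print(respond(2, 3, 6, agreeable_llm))  # draft contradicts it, so fallback
```

The point of the design is that the LLM never decides whether the answer is right. It only words a decision that was made elsewhere, and a draft that drifts from that decision is replaced by a safe template.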

The true IP, and hence the focus of our diligence, is in the proprietary models that the company has built. Can they be proven to deliver human-equivalent performance, or better? Are they trained on data that others cannot access, that improves over time? Have they passed a threshold of regulatory approval and user acceptance, so that we can anticipate mass adoption? If all of this is true, and an LLM is used to create a naturalistic interaction with the end customer, we’re likely to be excited.

This is the approach taken by the most compelling student- and patient-facing generative AI startups we’ve seen. It is defensible and explainable by design, while letting the LLM do what it does best – i.e. generate naturalistic responses that allow the user to receive a human-like interaction.

We have seen teams taking alternative approaches – i.e. building a new domain-specific foundational language model from scratch for a given use case. This is less likely to be a fit for us: it is a highly capital-intensive approach, and risks limiting future innovation by precluding the use of whatever is the best foundation model on the market.

The long-term bet: AI enables better outcomes for individuals and society

Within this broad category of ‘human empowerment’ are healthcare and education: two vast industries that have often resisted disruption, with complex and idiosyncratic value chains of private and public entities, whose incentives do not always match. In each, there’s a clear need for services that scale, and LLMs may well unlock this.

There is a complex question of who pays for these new applications – and the answer will vary by specific use case, ranging all the way from ads and consumer subscriptions to reimbursement by government health systems. Some customers – e.g. single-payer health systems and state education systems – are notoriously difficult to sell to at scale, but can unlock a large and differentiated set of user data. In general, we’re most excited by areas where there is immediate benefit to the user, and clear evidence of willingness to pay out-of-pocket.

We’re in the early stages of navigating the software markets that will result. We hypothesise that a domain-specific control layer is needed for most sensitive applications, and this will be the moat that defends most of the companies in which we invest. Independent actors will need to provide regulatory oversight, and this stands to be a venture-scale compliance market in its own right.

We’re excited to meet the entrepreneurs who are giving shape to this market, and building the applications that will deliver better outcomes for the next generation. If you’re one of them, please get in touch.